Distracted Driver Dataset

Hesham M. Eraqi 1,3,*, Yehya Abouelnaga 2,*, Mohamed H. Saad 3, Mohamed N. Moustafa 1

1 The American University in Cairo

2 Technical University of Munich

3 Valeo Egypt

* These authors contributed equally to this work.

Institutions

Our work is being used by researchers across academia and research labs.

Datasets

| | Distracted Driver V1 | Distracted Driver V2 |
|---|---|---|
| Key Contributions | | |
| Dataset Information | 31 drivers | 44 drivers |
| License Agreement | License Agreement V1 | License Agreement V2 |
| Download Link | If you agree with the terms and conditions, please fill out the license agreement and send it to Yehya Abouelnaga (yehya.abouelnaga@tum.de) or Hesham M. Eraqi (hesham.eraqi@gmail.com). Upon receiving a completed and signed license agreement, we will send you the dataset and our training/testing splits. | |
| Publication | | |

Terms & Conditions

- The dataset is the sole property of the Machine Intelligence group at the American University in Cairo (MI-AUC) and is protected by copyright. The dataset shall remain the exclusive property of the MI-AUC.

- The End User acquires no ownership, rights, or title of any kind in all or any part of the dataset.

- Any commercial use of the dataset is strictly prohibited. Commercial use includes, but is not limited to: testing commercial systems; using screenshots of subjects from the dataset in advertisements; selling data or making any other commercial use of the dataset; and broadcasting data from the dataset.

- The End User shall not, without prior authorization from the MI-AUC group, transfer, distribute, or broadcast all or part of the dataset to third parties in any way, whether permanently or temporarily.

- The End User shall send all requests for the distribution of the dataset to the MI-AUC group.

- All publications that report on research that uses the dataset should cite our publications.

Description

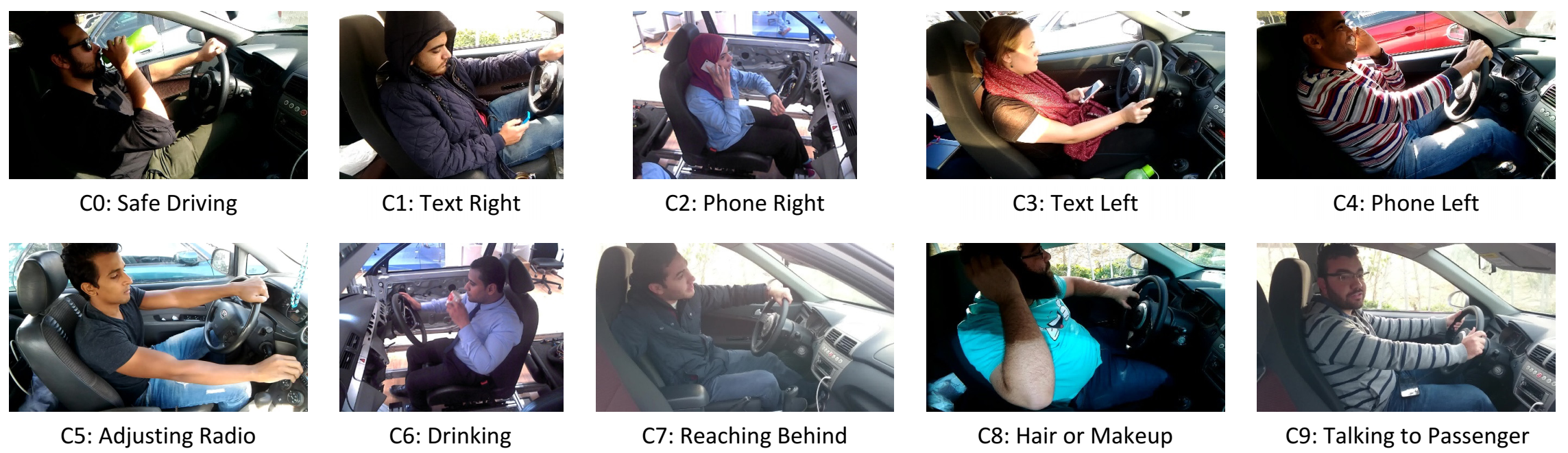

This is the first publicly available dataset for distracted driver detection. We had 44 participants (29 male and 15 female) from 7 different countries: Egypt (37), Germany (2), USA (1), Canada (1), Uganda (1), Palestine (1), and Morocco (1). Some drivers participated in more than one recording session, at different times of day, under different driving conditions, and wearing different clothes. Videos were shot in 5 different cars: Proton Gen2, Mitsubishi Lancer, Nissan Sunny, KIA Carens, and a prototyping car.

We extracted 14,478 frames distributed over the following ten classes: Safe Driving (2,986), Phone Right (1,256), Phone Left (1,320), Text Right (1,718), Text Left (1,124), Adjusting Radio (1,123), Drinking (1,076), Hair or Makeup (1,044), Reaching Behind (1,034), and Talking to Passenger (1,797).

Frames were sampled manually: we visually inspected the video files and assigned a distraction label to each frame. Transitional actions between consecutive distraction types were manually removed. The figure above shows samples from the ten classes.
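The class distribution is imbalanced: Safe Driving has almost three times as many frames as Reaching Behind. As an illustration only, the sketch below takes the per-class counts listed above and computes each class's share of the data together with inverse-frequency class weights, `total / (num_classes * count)`, which is the same formula scikit-learn uses for `class_weight="balanced"`. This weighting is a common heuristic for imbalanced training and is an assumption of this example, not a description of the training procedure in our publications. The names `CLASS_COUNTS` and `class_weights` are hypothetical.

```python
# Per-class frame counts as listed in the description above (44-driver dataset).
CLASS_COUNTS = {
    "Safe Driving": 2986,
    "Phone Right": 1256,
    "Phone Left": 1320,
    "Text Right": 1718,
    "Text Left": 1124,
    "Adjusting Radio": 1123,
    "Drinking": 1076,
    "Hair or Makeup": 1044,
    "Reaching Behind": 1034,
    "Talking to Passenger": 1797,
}


def class_weights(counts):
    """Hypothetical helper: inverse-frequency weights, total / (num_classes * count).

    A perfectly balanced dataset would give every class a weight of 1.0;
    rarer classes get weights above 1.0 and the majority class gets a
    weight below 1.0.
    """
    total = sum(counts.values())
    num_classes = len(counts)
    return {name: total / (num_classes * n) for name, n in counts.items()}


if __name__ == "__main__":
    total = sum(CLASS_COUNTS.values())
    print(f"total frames: {total}")
    weights = class_weights(CLASS_COUNTS)
    # Print classes from most frequent (lowest weight) to least frequent.
    for name, w in sorted(weights.items(), key=lambda kv: kv[1]):
        share = CLASS_COUNTS[name] / total
        print(f"{name:<22} {share:6.1%}  weight={w:.3f}")
```

Because each weight is inversely proportional to its class count, the weighted number of frames per class is the same for every class, and the weighted total still equals 14,478.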

Citation

All publications that report on research that uses the dataset should cite the following publications:

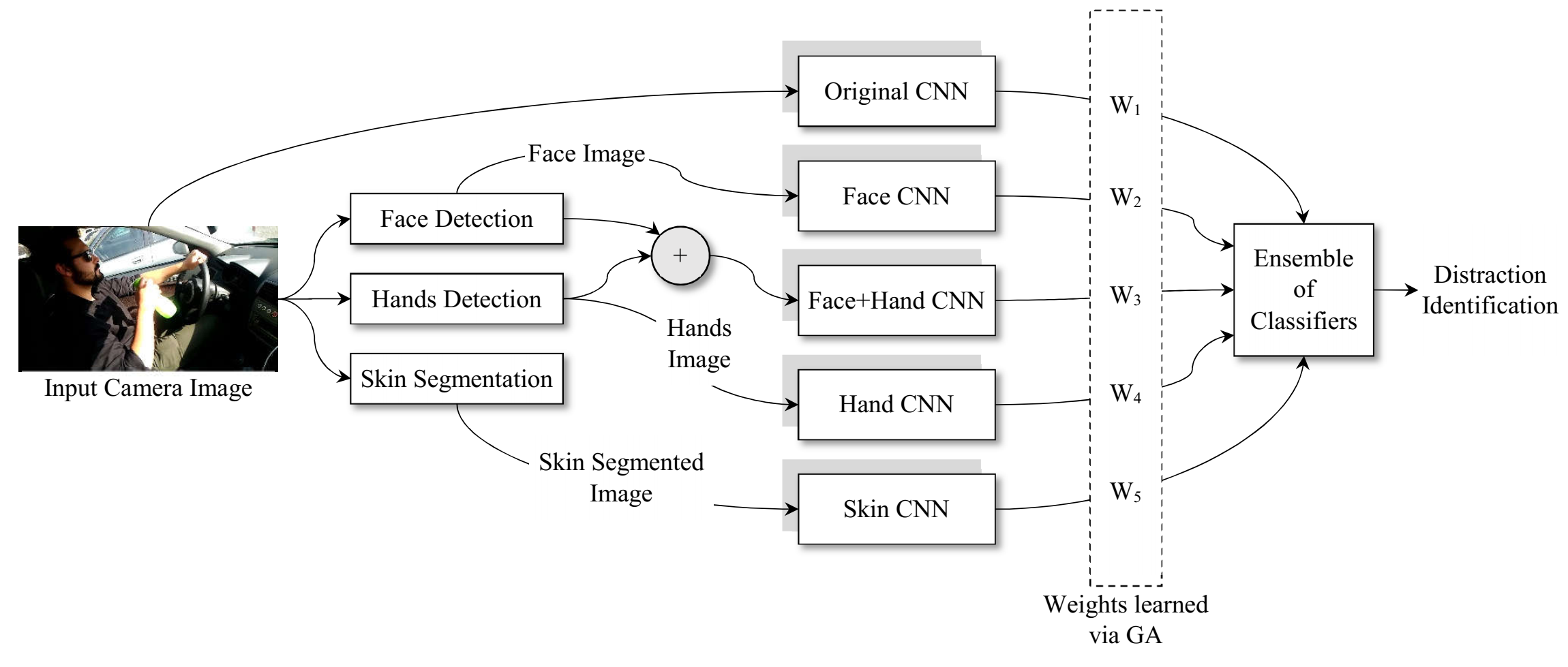

Hesham M. Eraqi, Yehya Abouelnaga, Mohamed H. Saad, Mohamed N. Moustafa, “Driver Distraction Identification with an Ensemble of Convolutional Neural Networks”, Journal of Advanced Transportation, Machine Learning in Transportation (MLT) Issue, 2019.

Yehya Abouelnaga, Hesham M. Eraqi, and Mohamed N. Moustafa, “Real-time Distracted Driver Posture Classification”, Machine Learning for Intelligent Transportation Systems Workshop at the 32nd Conference on Neural Information Processing Systems (NeurIPS), Montréal, Canada, 2018.